This is the second of two posts introducing new models that Latino Decisions will use to predict Latino voter turnout and the candidate choice of those voters who do turn out. In a previous post, we laid out a strategy for estimating Latino voter turnout. Here, we introduce and briefly explain our proprietary LD Vote Predict model, which allows us to estimate the proportion of Latino voters who will cast votes for each of the two major-party presidential candidates.

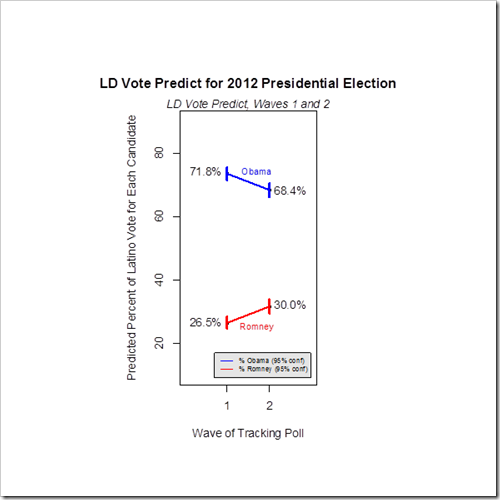

In our approach to estimating Latino vote choice in the upcoming presidential election, we take into account sources of uncertainty typically left unaddressed in major polls. This post will discuss our strategy for predicting Latino vote choice, which takes advantage of the impreMedia/Latino Decisions tracking poll to account for the impact of changes in the campaign. Our most recent estimate reveals that the Republican National Convention may have helped generate a slight bump for Romney, as his projected share of the Latino vote increased from 26.5% to 30% from the first to second week of the tracker.

Missing data plague all such surveys, and, while credible polling firms work to minimize this “missingness” at the survey design stage and mitigate its effects through adjustments for nonresponse, other sorts of uncertainty are more or less ignored as a rule. In particular:

- The population of interest is unclear ahead of time, since the set of those who will actually cast votes is hidden, not known with certainty even to the potential voters themselves.

- A not insignificant number of potential voters will at least claim to be undecided when surveyed.

With respect to the first of these issues associated with missing data, a distinction is usually drawn between registered voters and likely voters. In order to approximate the obscured target population of actual voters, the dominant approach is to consider stated preferences only among those considered likely to vote. The details used in identifying this group vary from pollster to pollster, but conventional wisdom would have us drop unlikely voters from consideration altogether. As pointed out in our previous post on predicting voter turnout, the conventional approach threatens to bias resulting estimates whenever the set of registered voters less likely to vote is large (typically around 40-50% of registered voters are eliminated from consideration) and when these less likely voters express much different preferences. This is especially problematic if, as in 2008, there is higher than expected turnout among those deemed not especially likely to vote.
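To make the concern concrete, here is a minimal sketch of how a likely-voter screen can shift an estimate. Every number in it (group sizes, turnout rates, candidate preferences) is hypothetical and chosen only for illustration; none comes from our polling data.

```python
def obama_share(groups):
    """Turnout-weighted Obama share across groups of registered voters.

    Each group is a tuple: (size, turnout_rate, obama_share_if_voting).
    All inputs here are hypothetical illustration values.
    """
    votes = sum(n * turnout * share for n, turnout, share in groups)
    voters = sum(n * turnout for n, turnout, _ in groups)
    return votes / voters

# Hypothetical electorate of 1,000 registered voters.
likely = (550, 0.85, 0.68)    # passes the likely-voter screen
unlikely = (450, 0.30, 0.78)  # dropped by the screen, but some still vote

screened = obama_share([likely])        # conventional: likely voters only
full = obama_share([likely, unlikely])  # keeps everyone, weighted by turnout

print(f"likely voters only: {screened:.3f}")
print(f"all registered:     {full:.3f}")
```

In this made-up case the screened estimate understates Obama's share, because the dropped group is large and leans differently. The gap grows if turnout among the dropped group is higher than expected.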

With respect to the second issue, if one treats the votes of the undecided as not providing useful information about the election outcome, or if one is truly interested just in the vote choice of those who clearly indicate such a choice, it is reasonable to focus just on the votes of those who have seemingly made up their minds. And so, we are accustomed to seeing pre-election polls where percentages of support for the candidates add up to somewhere between 70% and 95% late in a race. Implicitly, this treats the undecided as a wildcard, so if the margin between two candidates is less than the percent undecided, analysts may either think of the outcome as anybody’s guess or, more commonly, ignore it altogether. However, if we are interested in generating more realistic estimates, we must take advantage of valuable information provided by the survey, yet typically ignored.

We are not completely blind to how the undecided will vote; indeed, survey respondents provide valuable information to pollsters, both in terms of their relative likelihood of voting for each candidate (whether or not they themselves know it yet) and their probability of voting at all (since the undecided may be more likely to sit an election out). Thus, our approach will be to use whatever insight we have into individuals’ likelihood of turning out to vote and how they will vote if they do wind up voting, while also providing an empirically based assessment of our uncertainty.

The Modeling Strategy of LD Vote Predict

We will provide a more thorough methodological discussion of LD Vote Predict in a future post, but the basic strategy is as follows. We start from the perspective that what we wish to know is the proportion of Latino voters who shall cast votes for each of the two major candidates, among those who vote for one of these two candidates. In an election with a serious third party challenger, or if we are genuinely interested in knowing how many Latinos vote for less prominent candidates such as the Libertarian Gary Johnson or Green Party nominee Jill Stein, we would want to adjust our approach accordingly. However, in a plurality-based system, what is most relevant is the fraction of two-party vote going to each of the top two candidates across each state. So we estimate this proportion.

We treat individuals’ turnout and vote choice probabilistically, assuming that in advance of actual voting, each registered voter has a probability of turning out to vote, and a probability of voting for Romney (or Obama), given that they indeed cast a ballot. In order to estimate the proportion of Latinos voting for each candidate, we estimate these probabilities for each respondent using voting records and our model relating this information to outcomes, both available in the tracking poll.[1]

Among all registered Latino voters, we would thus estimate each person’s probability of voting for Obama as equivalent to their probability of voting at all (given their self-assessed likelihood of voting this year, and public records of their past voting) multiplied by the probability that they will vote for Obama if they do wind up voting. The average of these figures is our estimated proportion of Latinos voting for Obama (out of Latinos voting for either Obama or Romney). Our expressed uncertainty in predictions is based on the observed variability in simulated data, where we allow all parameters in the model to vary according to the precision of our parameter estimates.[2] In practice, we will do this each week for the sample of registered Latino voters in the current wave of our tracking poll, using weights to correct for bias due to non-response.
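The calculation described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the LD Vote Predict implementation: the `Respondent` fields, the sample values, and the function name are all hypothetical. Dividing by expected turnout is also an assumption made here so that the result reads as a share among those expected to vote for one of the two candidates.

```python
from dataclasses import dataclass

@dataclass
class Respondent:
    weight: float     # survey weight correcting for non-response (hypothetical)
    p_turnout: float  # estimated probability of voting at all
    p_obama: float    # estimated probability of choosing Obama, given a two-party vote

def two_party_share(respondents):
    """Weighted expected Obama votes divided by weighted expected voters."""
    expected_obama = sum(r.weight * r.p_turnout * r.p_obama for r in respondents)
    expected_voters = sum(r.weight * r.p_turnout for r in respondents)
    if expected_voters == 0:
        raise ValueError("no expected voters in sample")
    return expected_obama / expected_voters

# Three made-up respondents; real inputs would come from the tracking poll.
sample = [
    Respondent(weight=1.0, p_turnout=0.9, p_obama=0.8),
    Respondent(weight=1.2, p_turnout=0.5, p_obama=0.6),
    Respondent(weight=0.8, p_turnout=0.2, p_obama=0.3),
]

obama_share = two_party_share(sample)
romney_share = 1.0 - obama_share
print(f"Obama: {obama_share:.3f}, Romney: {romney_share:.3f}")
```

Note that a respondent with a low turnout probability still contributes, just with less influence, which is the key difference from dropping unlikely voters outright.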

It is worth noting that, although conventional analysis of election polls assumes voters who say that they plan to vote for a candidate will indeed vote for that individual, we do not take for granted that such respondents are certain to vote in the way they say they will. We thus extend our approach for dealing with undecided voters to treat those who say they will vote for a particular candidate as voting probabilistically as well, based on a combination of their self-assessment and their stated partisan identity, enthusiasm, and past behavior. Those who say they will vote for a Democrat, are enthusiastic about the election, and always vote for Democrats will be nearly certain to do so this time, but others with more ambiguous preferences, or who provide conflicting cues, may not. In 2010, for example, only one-third of respondents claiming to identify most closely with the Republican Party, yet declaring that they were undecided in the upcoming Congressional elections, actually wound up voting for a Republican candidate. In order to make best use of the sometimes ambiguous information provided by survey respondents, and correctly ascertain uncertainty in our predictions, we take this into account in our modeling approach.

Estimates from Waves 1 and 2 of the Latino Decisions/impreMedia Tracking Poll, with LD Vote Predict

Most Recent LD Vote Predictions[3]:

In the figure above, we see the LD Vote Predict forecasted percentage for presidential candidates Obama and Romney after each of the first two waves of our tracking poll.

While Romney’s rise in our official poll numbers was well within the margin of error, we nonetheless assert a distinguishable (albeit small) improvement this week. The candidates’ 95% credible intervals[4] in weeks one and two, indicated by short vertical lines in the figure above, are non-overlapping and suggest that Mitt Romney benefited from a modest 3.5-percentage-point (see comparison below) increase in projected Latino vote share, though this figure could plausibly be around three percentage points higher or lower. This does not get Romney within striking distance of the 38% his campaign identifies as its magic number for Latino vote share.

Wave 1: 73.5% (71.5%, 75.3%) Obama

26.5% (24.7%, 28.5%) Romney

Wave 2: 68.4% (66.1%, 70.5%) Obama

31.6% (29.5%, 33.9%) Romney

Please check back to our blog as we regularly update the LD Vote Predict and LD Turnout Predict estimates. We will also address the modeling strategy in greater detail in an upcoming methodological discussion.

Justin H. Gross is the Chief Statistician for Latino Decisions and an Assistant Professor of Political Science at the University of North Carolina.

[1] As a technical aside, we use a Bayesian model. One advantage is that this allows us to simulate from a range of possible behavior and a range of plausible values for the parameters in our model, weighted by how likely these values are, given the data we have at hand. This automatically conveys the uncertainty inherent in drawing conclusions from our limited sample. As but one example, suppose there are only twelve respondents to previous tracking polls with a particular value of X, a proxy variable for vote choice that combines information relevant to our prediction. Suppose further that of these twelve people with a shared value of X, call it x*, eight wound up voting for a Democrat, while four voted for a Republican candidate. We would have more confidence in assessing voting probabilities for another voter with X=x* if we had instead seen 80 such voters choose a Democrat and 40 choose a Republican. Our Bayesian simulations take this into account, as we sample from plausible values of Pr(vote Republican | X=x*), the probability of voting Republican given X=x*, in proportion to how likely each possible value of this parameter is, given the observations already made. The same sort of logic applies to all stages of our estimation procedure.

[2] Technically, we are sampling from the posterior distribution over these parameter values.

[3] Point estimates are the posterior median values, and credible intervals represent the 2.5th and 97.5th percentiles of the posterior distribution (a 95% credible interval).

[4] Credible intervals are the Bayesian versions of confidence intervals; in contrast to conventional confidence intervals, it is correct to interpret a 95% credible interval as implying that, according to our data, there is a 95% probability that the candidate’s support falls in this range of values.
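As a rough illustration of notes [1] and [3], the twelve-respondent example can be simulated with a simple Beta-Binomial setup. This is a sketch only: the uniform Beta(1, 1) prior, the function name, and the number of draws are assumptions chosen for illustration, and the actual model is more elaborate.

```python
import random

def posterior_summary(republican, democrat, draws=20000, seed=0):
    """Posterior median and 95% credible interval for Pr(vote Republican | X = x*).

    Assumes a uniform Beta(1, 1) prior (an illustrative choice), so the
    posterior is Beta(1 + republican, 1 + democrat).
    """
    rng = random.Random(seed)
    samples = sorted(
        rng.betavariate(1 + republican, 1 + democrat) for _ in range(draws)
    )

    def percentile(q):
        # Empirical quantile taken from the sorted posterior draws.
        return samples[min(draws - 1, int(q * draws))]

    return percentile(0.5), percentile(0.025), percentile(0.975)

small = posterior_summary(republican=4, democrat=8)    # 12 respondents
large = posterior_summary(republican=40, democrat=80)  # 120 respondents

small_width = small[2] - small[1]
large_width = large[2] - large[1]
print(f"n=12:  median={small[0]:.3f}, interval width={small_width:.3f}")
print(f"n=120: median={large[0]:.3f}, interval width={large_width:.3f}")
```

Both samples split one-third Republican, so the medians are similar, but the interval from 120 respondents is considerably narrower. That narrowing is the added confidence described in note [1].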